Snowflake continues to evolve at an impressive pace, and the introduction of Database Change Management (DCM) Projects is a meaningful step toward bringing structured CI/CD practices closer to the data platform.

For many teams, this is a welcome shift.

Historically, managing change in Snowflake has meant stitching together SQL scripts, orchestration pipelines, and external tooling just to move from development to production in a controlled way. DCM Projects begin to simplify that by introducing a declarative, project-based approach to managing Snowflake objects, along with a PLAN-before-DEPLOY workflow that provides visibility into changes before they are applied.

This is real progress. It shows Snowflake is investing in modern engineering practices like declarative configuration, version control, and controlled deployment directly within the platform.

At the same time, it raises an important question for teams thinking beyond initial adoption: is Database Change Management the same thing as enterprise CI/CD for data?

Not quite.

A strong foundation for managing change

DCM Projects introduce capabilities that align well with how modern systems are built. Defining desired state through project files and templates is a significant improvement over manually executed scripts. The PLAN step reduces deployment risk by showing exactly what will change before execution, and the alignment with Git-based workflows reinforces the expectation that Snowflake development should follow the same lifecycle discipline as application code.

Snowflake is also beginning to extend this model into data pipelines, including support for testing and expectations that allow teams to validate data as part of the deployment lifecycle.

Taken together, these capabilities provide a much stronger foundation for managing database changes in Snowflake. For individual developers and smaller teams, this can immediately improve consistency and reduce friction in day-to-day work.

But managing database changes, even when done well, is only one part of a much larger challenge.

The “Terraform for Snowflake” moment

A common way people are beginning to describe DCM Projects is “Terraform for Snowflake,” and the comparison is understandable.

The ability to define databases, schemas, warehouses, roles, grants, and tables declaratively in a small number of files is powerful. Instead of writing imperative DDL, teams define their desired state and let Snowflake calculate the difference. PLAN shows the exact changes; DEPLOY applies only those differences.
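To make the plan-then-deploy idea concrete, here is a minimal Python sketch of desired-state diffing. It is not how Snowflake implements DCM Projects; the `Change` class, the `plan` and `deploy` functions, and the dictionary-based object definitions are all hypothetical names chosen for illustration, assuming each object can be described by a name and a set of properties.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    """One planned difference (hypothetical structure, for illustration only)."""
    action: str  # "create", "alter", or "drop"
    name: str


def plan(current: dict[str, dict], desired: dict[str, dict]) -> list[Change]:
    """Compare current and desired definitions and list the differences."""
    changes = []
    for name in sorted(desired):
        if name not in current:
            changes.append(Change("create", name))
        elif current[name] != desired[name]:
            changes.append(Change("alter", name))
    for name in sorted(current):
        if name not in desired:
            changes.append(Change("drop", name))
    return changes


def deploy(current: dict[str, dict], changes: list[Change],
           desired: dict[str, dict]) -> dict[str, dict]:
    """Apply only the planned changes and return the resulting state."""
    result = dict(current)
    for change in changes:
        if change.action == "drop":
            del result[change.name]
        else:
            result[change.name] = desired[change.name]
    return result


# Assumed example state: object names and properties are made up.
current_state = {
    "RAW_DB": {"kind": "database"},
    "ETL_WH": {"kind": "warehouse", "size": "XSMALL"},
    "OLD_WH": {"kind": "warehouse", "size": "XSMALL"},
}
desired_state = {
    "RAW_DB": {"kind": "database"},
    "ETL_WH": {"kind": "warehouse", "size": "SMALL"},
    "ANALYTICS_DB": {"kind": "database"},
}

planned = plan(current_state, desired_state)
new_state = deploy(current_state, planned, desired_state)
```

In this sketch, unchanged objects such as `RAW_DB` never appear in the plan, and running `plan` again after `deploy` returns an empty list, which is the property that keeps drift low.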

In practice, this can feel transformative. You can stand up a complete Snowflake environment without manually writing deployment scripts. Changes become as simple as updating a definition file and applying the diff. Drift is reduced, and consistency improves.

That experience is a meaningful step forward for Snowflake practitioners.

However, it’s important to recognize what this capability represents. It is infrastructure and database change management done well. It is not, by itself, the full scope of enterprise CI/CD or DataOps.

The difference between change management and DataOps

Database Change Management focuses on defining, tracking, and deploying changes to database objects. Enterprise data delivery operates at a broader level.

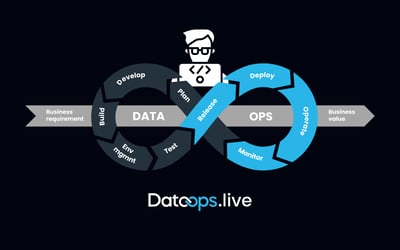

CI/CD in the context of data is not just about deploying objects. It’s about ensuring that those changes produce trusted, governed, and reliable data outcomes across the entire lifecycle. That includes how pipelines are orchestrated, how transformations are validated, how dependencies are managed, and how data is monitored once it is in production.

It also includes understanding whether the data produced by those pipelines is correct, complete, and usable for downstream analytics and AI workloads.
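As a rough illustration of what checking for "correct and complete" data can mean beyond deploying objects, the sketch below validates a handful of rows for missing values and duplicate keys. The `check_rows` function, the column names, and the sample rows are hypothetical; real teams would typically run equivalent checks inside their testing or expectations tooling rather than in hand-written Python.

```python
from __future__ import annotations


def check_rows(rows: list[dict], required: list[str], key: str) -> list[str]:
    """Return a list of human-readable problems found in the rows."""
    problems = []
    seen = set()
    for i, row in enumerate(rows):
        # Completeness: every required column must have a value.
        for col in required:
            if row.get(col) is None:
                problems.append(f"row {i}: missing {col}")
        # Uniqueness: the key column must not repeat.
        value = row.get(key)
        if value is not None:
            if value in seen:
                problems.append(f"row {i}: duplicate {key}={value}")
            seen.add(value)
    return problems


# Assumed sample data, made up for the example.
sample_rows = [
    {"id": 1, "amount": 10.0},
    {"id": 2, "amount": None},
    {"id": 1, "amount": 5.0},
]
issues = check_rows(sample_rows, required=["id", "amount"], key="id")
```

A table with these rows could deploy cleanly as an object and still fail both checks, which is the gap between structural change management and data outcomes.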

This is where CI/CD evolves into something more comprehensive. It becomes DataOps, an operating model that spans how data is built, tested, and deployed, and that in practice requires automation to run continuously at scale.

Where the Terraform analogy breaks down

Comparing DCM Projects to Terraform is useful, but only to a point.

Terraform manages infrastructure state. DCM Projects manage Snowflake object state. Enterprise data delivery, however, requires coordination across multiple layers that extend beyond either of those.

Even in scenarios where a full Snowflake environment can be defined with only a few files, several practical questions still need to be addressed.

- How are deployments triggered across environments?

- How are multiple teams coordinating changes to shared assets?

- How are transformations tested beyond structural validation?

- What happens when a deployment fails?

- How are changes rolled back or audited?

- How is runtime behavior monitored once pipelines are active?

These are not shortcomings of DCM Projects. They are simply outside the scope of what database change management is designed to solve.
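A few of the questions above (how deployments move across environments, what happens on failure, and how changes are audited) can be sketched as a small promotion loop. This is an assumption-laden illustration, not a DCM Projects or Snowflake CLI feature: the `promote` function, the environment names, and the `deploy_to` callback are hypothetical stand-ins for whatever a team's CI/CD tooling actually invokes.

```python
from __future__ import annotations

from typing import Callable


def promote(environments: list[str],
            deploy_to: Callable[[str], bool]) -> list[tuple[str, str]]:
    """Deploy to each environment in order, stop at the first failure,
    and return an audit trail of what happened."""
    audit = []
    for env in environments:
        succeeded = deploy_to(env)
        audit.append((env, "deployed" if succeeded else "failed"))
        if not succeeded:
            break
    # Environments after a failure are recorded as skipped, not silently dropped.
    for env in environments[len(audit):]:
        audit.append((env, "skipped"))
    return audit


def fake_deploy(env: str) -> bool:
    """Hypothetical deploy step that fails in the test environment."""
    return env != "test"


trail = promote(["dev", "test", "prod"], fake_deploy)
```

Even this toy version has to decide ordering, failure handling, and what gets recorded, which is the kind of logic that sits around a declarative deployment rather than inside it.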

The “Now What?” Reality

DCM Projects provide a structured way to define and deploy Snowflake resources, but they do not eliminate the need for a broader delivery process.

Snowflake’s own guidance reflects this, positioning DCM Projects as part of a workflow that can integrate with Snowflake CLI and existing CI/CD tooling. In practice, most organizations will still need to build or integrate additional layers to manage environment promotion, testing strategies, governance enforcement, and operational monitoring.

Over time, these layers often evolve into a collection of pipelines, scripts, and integrations that require ongoing maintenance. What starts as a flexible approach can gradually introduce complexity, especially as more teams and use cases are added.

The Narrative Taking Shape and Where It Needs More Context

There is a growing narrative in the community that Snowflake now has CI/CD because it offers declarative deployment, PLAN and DEPLOY workflows, and pipeline support.

That conclusion is understandable. The capabilities are real, and they represent meaningful progress.

However, many of the examples being shared focus on how to use DCM Projects to manage and deploy Snowflake objects, not on what it takes to operationalize those capabilities at enterprise scale.

Moving from a working example to a production operating model introduces additional considerations. Teams need to coordinate across domains, enforce standards consistently, expand testing beyond basic expectations, handle failures and rollbacks, and integrate observability across pipelines and data products.

These are not gaps in DCM Projects. They’re just the broader realities of enterprise data delivery.

Why DataOps Matters Even More for AI

As organizations accelerate their AI initiatives, the importance of this distinction becomes even more critical.

AI systems are highly sensitive to data quality, consistency, and governance. The success of those systems depends less on the sophistication of the model and more on the reliability of the data feeding it.

AI-ready data must be consistent across environments, validated through continuous testing, governed through policy, observable in production, and reproducible across deployments.

Achieving that level of reliability requires more than managing database changes. It requires an end-to-end approach to how data is delivered and operated.

From Features to Platforms

This is why the conversation is shifting from individual features to integrated platforms.

A feature solves a specific problem. A platform brings together multiple capabilities into a cohesive system that operates across the lifecycle.

In the context of data, that system includes automated CI/CD, testing, governance, observability, and collaboration, all working together. DCM Projects contribute meaningfully to this system by providing a native way to manage and deploy Snowflake objects using modern practices.

They are a strong building block, but they are not the entire structure.

The Role of Agentic AI

As AI becomes more integrated into development workflows, generating code is becoming easier. Delivering that code safely into production is not.

This is where agentic AI plays a different role. Instead of simply generating Snowflake SQL or pipelines, it participates in the delivery lifecycle by understanding dependencies, validating changes, expanding test coverage, and helping enforce standards.

At DataOps.live, this is reflected in Metis, an agentic AI DataOps engineer designed to accelerate development while maintaining governance and control. It acts as a force multiplier, helping teams move faster without introducing additional risk.

Building on Snowflake and Extending It to Scale

From a practitioner’s perspective, the goal isn’t to replace what Snowflake is building. It’s to extend it.

DCM Projects are a meaningful step forward. They improve how database changes are defined, tracked, and deployed, and they bring Snowflake closer to the development patterns data engineers have been asking for.

But as teams move beyond individual use cases and begin operating across multiple environments, pipelines, and domains, the challenge shifts. It becomes less about how changes are deployed, and more about how those changes are consistently operationalized across the full lifecycle.

That includes coordinating deployments across environments, enforcing testing and governance standards, managing dependencies between pipelines, and maintaining visibility into how data behaves once it is in production.

This is where a DataOps automation platform comes into play. It builds on top of capabilities like DCM Projects and brings together deployment, testing, governance, orchestration, and observability into a unified, repeatable lifecycle.

The result is not just faster deployments. It is the ability to deliver data products consistently, reliably, and at scale, without requiring teams to build and maintain that operational layer themselves.

Snowflake continues to strengthen the foundation. Extending that foundation into a complete, enterprise-ready delivery model is the next step.

Delivering change is progress. Delivering trusted data at scale is the goal.

With DataOps.live, teams can extend Snowflake into a complete delivery model, unifying CI/CD, testing, governance, and observability into a single, repeatable lifecycle.

Start your 30-day free trial of DataOps.live and see how to operationalize enterprise DataOps without building and maintaining the platform yourself.

By

Keith Belanger
